The lag refers to the order of correlation. In the correlogram, the correlation at lag 0 is 1, because the data is perfectly correlated with itself. At a lag of 1, the correlation is around 0.5 (this differs from the 0.64 computed from the shifted columns, because the correlogram uses a slightly different formula). The correlations are negative when the points are 3, 4, and 5 apart.

Sampling error alone means that we will typically see some autocorrelation in any data set, so a statistical test is required to rule out the possibility that sampling error is causing the autocorrelation. The standard test for this is the Durbin-Watson test. This test only explicitly tests first-order correlation, but in practice it tends to detect most common forms of autocorrelation, as most of them exhibit some degree of first-order correlation.

When autocorrelation is detected in the residuals from a model, it suggests that the model is misspecified (i.e., in some sense wrong). A common cause is that some key variable or variables are missing from the model. Where the data has been collected across space or time, and the model does not explicitly account for this, autocorrelation is likely. For example, if a weather model is wrong in one suburb, it will likely be wrong in the same way in a neighboring suburb. The fix is to either include the missing variables or explicitly model the autocorrelation (e.g., using an ARIMA model).
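As a rough illustration of what the Durbin-Watson test measures, the sketch below computes the Durbin-Watson statistic, d = Σ(e_t − e_{t−1})² / Σ e_t², from a list of residuals. The function name and the residual values are made up for illustration and do not come from the post. Values of d near 2 suggest no first-order autocorrelation, values toward 0 suggest positive autocorrelation, and values toward 4 suggest negative autocorrelation. Judging significance still requires the test's critical values, which this sketch does not cover.

```python
def durbin_watson(residuals):
    """Durbin-Watson statistic: sum of squared successive differences
    divided by the sum of squared residuals."""
    numer = sum(
        (residuals[t] - residuals[t - 1]) ** 2
        for t in range(1, len(residuals))
    )
    denom = sum(e ** 2 for e in residuals)
    return numer / denom


# Hypothetical residuals that drift smoothly (positive autocorrelation).
smooth_residuals = [0.5, 0.8, 0.6, 0.2, -0.3, -0.7, -0.5, -0.1]

# Hypothetical residuals that flip sign every step (negative autocorrelation).
zigzag_residuals = [1.0, -1.0, 1.0, -1.0]

print(durbin_watson(smooth_residuals))  # well below 2
print(durbin_watson(zigzag_residuals))  # 12 / 4 = 3.0, above 2
```

Statistical packages such as EViews report this statistic alongside regression output, so the hand computation is mainly useful for seeing why smooth residuals push d down and zigzag residuals push it up.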

The correlogram plots the autocorrelation as a function of the lag. The correlogram is for the data shown above. An index (HCPI) has been produced for Sierra Leone with EViews, making use of best model selection.

EViews autocorrelation series

One way to investigate the possible existence of such correlation is to obtain the least squares residuals $e_t$ and to check whether the sample correlations between $e_t$ and $e_{t-1}, e_{t-2}, \dots$ are significantly different from zero. The existence of autocorrelation in the residuals of a model is a sign that the model may be unsound. Autocorrelation is diagnosed using a correlogram (ACF plot) and can be tested using the Durbin-Watson test.

The auto part of autocorrelation is from the Greek word for self, and autocorrelation means data that is correlated with itself, as opposed to being correlated with some other data. The column to the right shows the last eight of these values, moved “up” one row, with the first value deleted. When we correlate these two columns of data, excluding the last observation that has missing values, the correlation is 0.64. This means that the data is correlated with itself (i.e., we have autocorrelation/serial correlation).

The example above shows positive first-order autocorrelation, where first order indicates that observations that are one apart are correlated, and positive means that the correlation between the observations is positive. When data exhibiting positive first-order correlation is plotted, the points appear in a smooth snake-like curve, as on the left. With negative first-order correlation, the points form a zigzag pattern if connected, as shown on the right.

Diagnosing autocorrelation using a correlogram

A correlogram shows the correlation of a series of data with itself; it is also known as an autocorrelation plot and an ACF plot.
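The shifted-column calculation and the correlogram value can be compared side by side. The sketch below is only an illustration: the nine data values are hypothetical (they are not the post's data, so the result will not be 0.64), and the function names are invented here. The first function correlates the series with a copy of itself shifted by the lag, as described above. The second uses the usual sample autocorrelation formula behind correlograms, which takes the mean and variance from the whole series, and that is why the two numbers generally differ a little.

```python
from math import sqrt


def pearson(x, y):
    """Ordinary Pearson correlation between two equal-length lists."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / sqrt(var_x * var_y)


def shifted_correlation(series, lag=1):
    """Correlate the series with itself moved 'up' by `lag` rows,
    dropping the rows that have no partner."""
    n = len(series)
    return pearson(series[: n - lag], series[lag:])


def acf(series, lag):
    """Sample autocorrelation as typically plotted in a correlogram:
    deviations use the overall mean, scaled by the full-series variance."""
    n = len(series)
    mean = sum(series) / n
    denom = sum((v - mean) ** 2 for v in series)
    numer = sum(
        (series[t] - mean) * (series[t + lag] - mean) for t in range(n - lag)
    )
    return numer / denom


# Hypothetical series of nine observations (not the post's data).
data = [1.0, 2.0, 3.0, 2.5, 2.0, 1.0, 0.5, 1.5, 3.0]

print(shifted_correlation(data, lag=1))
print(acf(data, 1))
print([round(acf(data, k), 2) for k in range(6)])  # values a correlogram would plot
```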

EViews autocorrelation serial

When you have a series of numbers, and there is a pattern such that values in the series can be predicted based on preceding values in the series, the series of numbers is said to exhibit autocorrelation. In a regression setting, autocorrelation exists when the equation error $e_t$ is correlated with any of its past values $e_{t-1}, e_{t-2}, \dots$. This is also known as serial correlation and serial dependence.
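To make the idea of an error that depends on its own past values concrete, here is a small simulation sketch. It assumes one specific and very simple form of dependence, a first-order autoregressive error e_t = rho * e_{t-1} + v_t with independent noise v_t. That form, the parameter name `rho`, and the function names are assumptions chosen for illustration and are not taken from the post. A positive `rho` should give a positive lag-1 autocorrelation and a negative `rho` a negative one.

```python
import random


def simulate_ar1(rho, n, seed=0):
    """Simulate e_t = rho * e_{t-1} + v_t with standard normal noise v_t.
    (Illustrative assumption: first-order autoregressive errors.)"""
    rng = random.Random(seed)
    errors = [rng.gauss(0.0, 1.0)]
    for _ in range(n - 1):
        errors.append(rho * errors[-1] + rng.gauss(0.0, 1.0))
    return errors


def lag1_autocorrelation(series):
    """Sample autocorrelation at lag 1, using the overall mean and variance."""
    n = len(series)
    mean = sum(series) / n
    denom = sum((v - mean) ** 2 for v in series)
    numer = sum(
        (series[t] - mean) * (series[t - 1] - mean) for t in range(1, n)
    )
    return numer / denom


positive = simulate_ar1(rho=0.8, n=2000)   # each error resembles the previous one
negative = simulate_ar1(rho=-0.8, n=2000)  # each error tends to flip sign

print(round(lag1_autocorrelation(positive), 2))
print(round(lag1_autocorrelation(negative), 2))
```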

EViews autocorrelation how to

This post explains what autocorrelation is, the types of autocorrelation (positive and negative), and how to diagnose and test for autocorrelation.

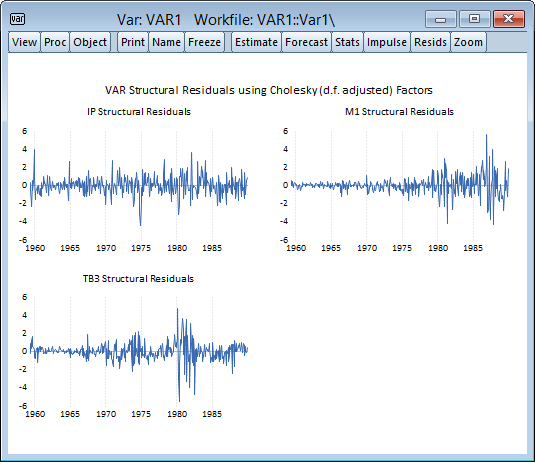

Autocorrelation is a characteristic of data that shows the degree of similarity between the values of the same variable over successive time intervals.

This question is about how to interpret the LM test in a VAR. For example, under a VAR with lag 1, the LM test output (columns: Lags, LM-Stat, Prob; the values are not reproduced here) shows autocorrelation at lag 1. So I reran the VAR with one more lag, and the LM test under the VAR with 2 lags again gives a Lags, LM-Stat, Prob table. Does my VAR now have autocorrelation or not? As far as I know (I don't have much technical knowledge), the standard LM test is a joint test. But from the description in the EViews manual, it looks like the LM test under a VAR is not a joint hypothesis test.

I also have panel data with serial autocorrelation and heteroskedasticity, and I have no idea what model would solve this problem or what command I can use.